Market research best practices help teams collect more accurate data, ask better questions, and turn findings into decisions that actually hold up. The difference between research that drives strategy and research that collects dust usually comes down to how the process is designed, not how much budget was spent. This guide covers nine practices that separate reliable market research from the kind that misleads.

What Makes Market Research Reliable (and What Doesn’t)

Market research is only as good as the process behind it. Even well-funded studies can produce misleading results if the methodology is flawed, the data is dirty, or the questioning is too narrow. Reliable market research has three things in common:

- it uses methods appropriate to the question being asked,

- it produces data that accurately represents the audience being studied,

- and it’s structured to catch its own blind spots.

That last point matters more than most teams realize. Research blind spots (assuming consumers think what you expect them to think, accepting survey data at face value, or asking closed questions that constrain the answer) can silently shape your conclusions before you’ve done any analysis. The best market research strategy isn’t just about asking the right questions. It’s about building a process that resists the errors you don’t know you’re making.

Why Getting Market Research Right Matters More Than Most Teams Realize

Poor market research doesn’t just fail to add value; it actively creates risk. Decisions made on the basis of bad data are often worse than decisions made on instinct, because they carry a false sense of validation. There are three specific ways this plays out in practice.

- First, it creates costly commitment. When flawed research supports a product launch, a positioning shift, or a pricing decision, teams commit resources based on an inaccurate picture. The cost of correcting course mid-execution is almost always higher than the cost of doing better research upfront.

- Second, it compounds across the organization. Market research findings tend to travel. They get cited in strategy decks, used to align stakeholders, and referenced in budget conversations. When the underlying data is weak, the impact multiplies.

- Third — and most insidiously — data quality problems are largely invisible. Unlike a broken dashboard or a failed campaign, bad survey data often looks fine on the surface. Fraudulent respondents, inattentive participants, and poorly structured questions all produce data that passes a basic review. The errors are buried in the numbers. GroupSolver’s research on when bad data costs real money explores exactly how that plays out and what it actually costs.

9 Market Research Best Practices That Deliver Better Results

1. Combine Multiple Research Strategies

There are countless ways to conduct market research, but no single method is comprehensive enough to serve as your only research strategy. To get a well-rounded view of your customer base and the market you’re competing in, you need to use several types of research. These could include:

- Surveying your target consumers

- Reviewing existing publicly available research about the size and attributes of your market, as well as your target consumers and their buying habits

- Analyzing your competitors’ performance and their marketing strategies

- Evaluating your own data from previous products or marketing campaigns

- Assessing the data from your business’s site to understand what type of traffic you’re getting and who you’re missing

These are merely a few examples of the types of market research you should be conducting and pooling together to get the information you need. Different methods surface different signals. Quantitative research shows you what, qualitative research shows you why, and behavioral data shows you what people actually do, regardless of what they say. No single source gives you the full picture.

For a breakdown of tools that support multi-method research, see GroupSolver’s overview of the best market research tools.

2. Make Your Research Process Ongoing

One of the most common mistakes among teams that aren’t sure how to conduct effective market research is assuming that market research is ever “done.” Your target audience’s opinions and buying habits change constantly, especially during major world events. Revisit and redo parts of your market research on a regular basis to see how your target market has changed so you can adapt. Conducting market research surveys at consistent intervals helps you maintain a pulse on your target audience.

Tracking studies — research conducted at regular intervals with the same or similar questions — are especially valuable here. They let you see directional change over time rather than just a snapshot, which is what you need to catch shifts in perception before they affect your bottom line.

3. Research Outside Your Expected Market

Because products are built with a specific type of consumer in mind, many brands make the mistake of researching only their expected market. If you expand your market research to survey other groups of people as well, you may discover use cases for your product that you didn’t expect.

For example, if your product is a scheduling tool designed for corporate employees, you might discover that it also satisfies a need for stay-at-home parents to balance their kids’ activity schedules and social calendars. You will never know if you don’t ask.

Expanding research scope also guards against market tunnel vision, the tendency to optimize for your current customer while missing adjacent audiences that could represent meaningful growth.

4. Use Open-Ended Questioning

Surveying potential consumers is a major cornerstone of market research, but if you’re like most brands, the way you conduct surveys could be holding back your research. If you’re using surveys with multiple-choice questions, you aren’t getting consumers’ true opinions; you’re getting their closest choice out of the options you have provided. That can be downright inaccurate.

Instead, use a survey approach that captures open-ended responses. You’ll be able to find out what consumers actually think rather than their closest guess, and you won’t be inadvertently clouding their thought process with your pre-written multiple-choice answers.

The practical challenge with open-ended questions has always been analysis at scale, but that’s precisely where modern AI-powered tools have changed the game. What used to require weeks of manual coding can now be processed, themed, and quantified in a fraction of the time. (More on that in Best Practice 9.)

5. Conduct Research at Multiple Depth Levels

Let’s say you’re doing market research for a new product you want to launch. You use surveys or focus groups to get consumers’ impressions of the product, and the results aren’t positive. The problem now is that you don’t know where you went wrong. Was it the execution of the product? Or were you entirely wrong about the problem your product was meant to solve in the first place?

This is why you should approach market research from multiple depths. Start by researching the problem you’re trying to solve for consumers to see if it’s even a problem at all. Then, research the general concept of your solution to the problem and whether it’s on the right track. Finally, research the perceptions of the finished or nearly finished product to see if you’ve executed your solution well. This lets you see exactly where you might have gone wrong, so you can adjust before it becomes expensive.

6. Find Out Where You Stand

Don’t assume that people perceive your brand the way you expect them to, or in accordance with the brand identity you’ve been trying to build. If your market research is for an existing business or brand, you can only shift perceptions if you know what they currently are. That’s why one of the most consistently underused market research best practices is simply asking your target consumers what they actually think about your brand, not just whether they’re aware of it.

Brand perception research often surfaces gaps between intended positioning and actual perception that are completely invisible from the inside. Understanding that gap is the prerequisite for closing it. GroupSolver’s brand perception research tools are designed specifically for this kind of open-ended perception mapping. And for a practical framework on how to approach it, this guide on understanding what customers really think about your brand is a useful starting point.

7. Detect Bad Survey Responses Before They Reach Your Analysis

This is one of the most overlooked steps in market research and one of the most consequential. Fraudulent respondents, bots, and inattentive participants don’t announce themselves. They fill in your survey, pass a basic review, and end up in your dataset looking exactly like valid responses.

The most common types of bad survey responses include:

- Speeders: Respondents who complete the survey far too quickly to have read the questions properly

- Straight-liners: Respondents who select the same answer for every item in a rating scale

- Random clickers: Respondents providing inconsistent or incoherent answers across the survey

- Fraudulent or duplicate entries: Particularly common when using online panels with limited verification

The impact compounds quickly. Even a 10–15% contamination rate in your dataset can meaningfully skew directional findings, and the effect is invisible unless you’re actively looking for it. The fix is to build detection into your process, not bolt it on after collection is complete. This means including attention checks, monitoring response time distributions, flagging inconsistent answer patterns, and reviewing open-ended responses for gibberish or copied text.
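
The checks described above can be sketched in a few lines of Python. This is a minimal illustration, not a production detector: the field names (`seconds`, `grid`, `attention_ok`, `open_text`), the sample responses, and the thresholds (one third of the median completion time, fewer than two words in an open end) are all assumptions chosen for the example and should be tuned to your own survey.

```python
from collections import Counter
from statistics import median


def flag_low_quality(responses, speed_ratio=0.33, min_words=2):
    """Return {respondent_id: [reasons]} for responses that look suspect.

    speed_ratio and min_words are illustrative thresholds, not standards.
    """
    typical_seconds = median(r["seconds"] for r in responses)
    # Count identical open-ended answers to catch copied text.
    text_counts = Counter(r["open_text"].strip().lower() for r in responses)

    flags = {}
    for r in responses:
        reasons = []
        if r["seconds"] < speed_ratio * typical_seconds:
            reasons.append("speeder")
        if len(r["grid"]) > 1 and len(set(r["grid"])) == 1:
            reasons.append("straight_liner")
        if not r["attention_ok"]:
            reasons.append("failed_attention_check")
        if len(r["open_text"].split()) < min_words:
            reasons.append("thin_open_end")
        if text_counts[r["open_text"].strip().lower()] > 1:
            reasons.append("duplicate_open_end")
        if reasons:
            flags[r["id"]] = reasons
    return flags


# Hypothetical sample data for illustration only.
responses = [
    {"id": "r1", "seconds": 310, "grid": [4, 2, 5, 3], "attention_ok": True,
     "open_text": "I like the calendar sync feature"},
    {"id": "r2", "seconds": 45, "grid": [3, 3, 3, 3], "attention_ok": True,
     "open_text": "good"},
    {"id": "r3", "seconds": 280, "grid": [5, 4, 4, 2], "attention_ok": False,
     "open_text": "Too expensive for what it does"},
    {"id": "r4", "seconds": 295, "grid": [2, 3, 4, 4], "attention_ok": True,
     "open_text": "Setup took too long"},
]

flags = flag_low_quality(responses)
print(flags)
```

In this sample, `r2` trips three checks at once and `r3` fails the attention check. A flag is a prompt for review rather than an automatic exclusion: a single signal, such as a fast completion time, can have an innocent explanation.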

GroupSolver’s data quality resource covers how automated quality detection works in practice.

8. Monitor Survey Data Quality Throughout the Field Period

Related to detection, but distinct: monitoring data quality isn’t a post-collection task. It’s an active process that should run while your study is still in the field.

If a panel source is delivering low-quality responses, you want to know on day two, not after you’ve collected 1,000 completes. Real-time quality monitoring lets you intervene: pause collection, flag a panel source, or adjust screening criteria before bad data accumulates.

Key signals to watch during fieldwork:

- Completion rate and drop-off point by source

- Average response time relative to survey length

- Open-ended response quality: Are people actually writing coherent answers?

- Inconsistency flags across answer patterns

The investment in active monitoring is modest compared to the cost of running analysis on a compromised dataset and making decisions based on the results.
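
One way to picture in-field monitoring is a small daily check that groups completes by panel source and surfaces any source whose share of flagged responses is running high. The sketch below assumes each complete has already been marked as flagged or not by your quality checks. The source names, the 15% ceiling, and the 20-complete minimum are illustrative assumptions, not recommended values.

```python
from collections import defaultdict


def sources_to_review(completes, max_flag_rate=0.15, min_completes=20):
    """Return {source: flag_rate} for sources above the flag-rate ceiling.

    completes is an iterable of (source, is_flagged) pairs.
    Sources with fewer than min_completes are skipped as too small to judge.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for source, is_flagged in completes:
        totals[source] += 1
        flagged[source] += int(is_flagged)

    report = {}
    for source, n in totals.items():
        if n < min_completes:
            continue
        rate = flagged[source] / n
        if rate > max_flag_rate:
            report[source] = round(rate, 2)
    return report


# Hypothetical completes collected so far in the field period.
completes = (
    [("panel_a", False)] * 45 + [("panel_a", True)] * 5
    + [("panel_b", False)] * 28 + [("panel_b", True)] * 12
    + [("panel_c", True)] * 3
)

report = sources_to_review(completes)
print(report)
```

Here `panel_b` stands out with 12 flagged completes out of 40, while `panel_c` is skipped because three completes are too few to judge. Running a check like this daily is what makes it possible to pause or re-screen a source on day two rather than after the quota is full.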

9. Use AI to Analyze Qualitative Responses at Scale

Open-ended survey questions produce the richest data in market research and, historically, the hardest to use. Manual coding is slow, expensive, and prone to analyst bias. As a result, many teams either avoid open-ended questions altogether or collect them and never fully analyze them.

AI-powered qualitative analysis resolves this bottleneck. Modern tools can process hundreds or thousands of open-ended responses, identify recurring themes, quantify sentiment, and surface connections across answers that would take weeks to find manually.

This doesn’t replace human judgment; it removes the bottleneck that prevents human judgment from being applied to large datasets. A researcher can spend their time interpreting and acting on patterns, rather than tagging and sorting individual responses.

For teams building their approach to AI-assisted research, GroupSolver’s guide on trusting and applying AI in market research is a practical read on where AI adds value and where human oversight still matters.

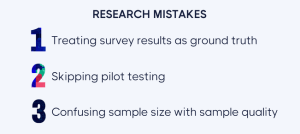

Three Research Mistakes That Quietly Undermine Your Results

1. Treating survey results as ground truth

Survey data reflects what respondents were willing to say, in the context of the questions you asked, at the moment they took the survey. That’s useful, but it isn’t certain. Pair quantitative survey findings with qualitative exploration before committing major decisions to a single data point.

2. Skipping pilot testing

Launching a survey without a small pilot run is one of the most common sources of avoidable error. Questions that seem clear to the researcher are often interpreted differently by respondents. A 10-person pilot test can catch ambiguous wording, confusing scales, and structural problems before they contaminate your full dataset.

3. Confusing sample size with sample quality

A large sample of the wrong audience, or a large sample polluted with fraudulent responses, produces confident-sounding but unreliable data. Sample quality matters more than sample size. Define your target audience precisely, use appropriate screening questions, and invest in the quality controls described in Best Practices 7 and 8 above.
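
A short worked example shows why a bigger sample does not rescue a contaminated one. The numbers are hypothetical: suppose 40% of genuine respondents would answer “yes” to a purchase-intent question, and 15% of the sample consists of bad respondents who all click “yes.” The sketch uses the standard margin-of-error formula for a proportion at 95% confidence.

```python
import math


def observed_share(true_share, bad_rate, bad_share):
    """Blend the genuine share with the share among bad respondents."""
    return (1 - bad_rate) * true_share + bad_rate * bad_share


def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a proportion p with sample size n."""
    return z * math.sqrt(p * (1 - p) / n)


true_share = 0.40  # hypothetical share among genuine respondents
obs = observed_share(true_share, bad_rate=0.15, bad_share=1.0)
bias = obs - true_share

for n in (500, 5000):
    moe = margin_of_error(obs, n)
    print(f"n={n}: observed={obs:.2f}, margin of error=+/-{moe:.3f}, bias={bias:+.2f}")
```

The margin of error shrinks as the sample grows, but the gap between the observed and true share stays the same. At the larger sample size the result looks more precise while being just as wrong, which is the “confident-sounding but unreliable” problem in numeric form.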

Key Takeaways

- Market research reliability depends on methodology and data quality, not just budget or sample size

- Combine multiple research approaches; no single method gives a complete picture

- Research is a continuous process, not a one-time project

- Open-ended questions surface what consumers actually think; multiple-choice questions give you their closest guess from a list you wrote

- Research at multiple depth levels so you can pinpoint specifically where something went wrong

- Knowing your current brand perception is the prerequisite for shifting it

- Bad survey responses are common and invisible without active detection. Build quality checks into the process, not the cleanup

- Data quality monitoring should happen during fieldwork, not after it

- AI-powered qualitative analysis makes open-ended insights actionable at a scale that manual coding never could

FAQ

What are market research best practices?

Market research best practices are the methods and processes that improve the accuracy, reliability, and usefulness of your research. They include combining multiple research methods, using open-ended questioning, maintaining ongoing research programs, actively detecting bad survey responses, monitoring data quality during fieldwork, and applying AI tools to analyze qualitative data at scale. Together, they give you findings you can actually act on.

How do you ensure data quality in market research surveys?

Data quality requires attention at multiple stages: screening participants before the survey, including attention checks within it, reviewing response patterns during fieldwork, and cleaning the dataset before analysis. Automated tools can detect speeders, straight-liners, and fraudulent entries that would otherwise pass a basic visual review and corrupt your results.

Why are open-ended survey questions better than multiple choice?

Multiple-choice questions constrain respondents to answers you’ve already imagined. Open-ended questions let participants express what they actually think, in their own words, without your pre-written options framing their response. The historical tradeoff (open-ended answers are harder to analyze at scale) has been largely resolved by AI-powered qualitative analysis tools.

How often should you conduct market research?

For most businesses, some form of market research should be running continuously. Brand tracking studies typically run quarterly. Product or concept research is conducted at key decision points. Customer perception surveys can run on a rolling basis. The goal is a consistent pulse on your audience, not occasional snapshots taken months apart.

What is the biggest mistake companies make in market research?

Treating survey data as ground truth without validating the quality of the underlying responses. Even well-designed surveys can produce misleading results if the respondent pool contains fraudulent or inattentive participants, if questions were misinterpreted, or if the sample doesn’t accurately represent the target audience. Confidence in your data has to be earned, not assumed.

Getting market research right isn’t just a methodological exercise; it determines whether the decisions your team makes are grounded in reality or built on noise. The nine practices in this guide address both layers: the strategic layer (what to research, how broadly, how often) and the quality layer (how to make sure the data you’re collecting is actually trustworthy).

The two have to work together. If anything has shifted in market research over the last few years, it’s this: the tools available to detect data quality problems and analyze open-ended responses at scale have improved dramatically. Teams that take advantage of that shift produce better insights, faster, with more confidence. Those that don’t are still relying on processes designed for a different era.

Stay curious. Ask why, and make sure the data you’re using to answer that question is worth trusting.